Online courses directory (10358)

This course covers matrix theory and linear algebra, emphasizing topics useful in other disciplines such as physics, economics and social sciences, natural sciences, and engineering. It parallels the combination of theory and applications in Professor Strang’s textbook Introduction to Linear Algebra.

Course Format

This course has been designed for independent study. It provides everything you will need to understand the concepts covered in the course. The materials include:

- A complete set of Lecture Videos by Professor Gilbert Strang.

- Summary Notes for all videos along with suggested readings in Prof. Strang's textbook Linear Algebra.

- Problem Solving Videos on every topic, taught by an experienced MIT Recitation Instructor.

- Problem Sets to do on your own with Solutions to check your answers against when you're done.

- A selection of Java® Demonstrations to illustrate key concepts.

- A full set of Exams with Solutions, including review material to help you prepare.

Other OCW Versions

OCW has published multiple versions of this subject.

Related Content

This course offers a rigorous treatment of linear algebra, including vector spaces, systems of linear equations, bases, linear independence, matrices, determinants, eigenvalues, inner products, quadratic forms, and canonical forms of matrices. Compared with 18.06 Linear Algebra, more emphasis is placed on theory and proofs.

This is a basic subject on matrix theory and linear algebra. Emphasis is given to topics that will be useful in other disciplines, including systems of equations, vector spaces, determinants, eigenvalues, similarity, and positive definite matrices.

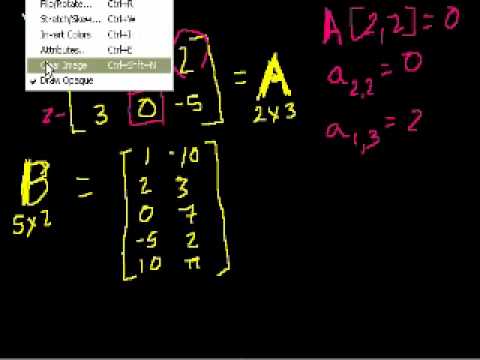

Matrices, vectors, vector spaces, transformations. Covers all topics in a first-year college linear algebra course. This is an advanced course normally taken by science or engineering majors after at least two semesters of calculus (although calculus is not really a prerequisite), so don't confuse it with regular high school algebra.

- Introduction to matrices
- Matrix multiplication (part 1)
- Matrix multiplication (part 2)
- Idea Behind Inverting a 2x2 Matrix
- Inverting matrices (part 2)
- Inverting Matrices (part 3)
- Matrices to solve a system of equations
- Matrices to solve a vector combination problem
- Singular Matrices
- 3-variable linear equations (part 1)
- Solving 3 Equations with 3 Unknowns
- Introduction to Vectors
- Vector Examples
- Parametric Representations of Lines
- Linear Combinations and Span
- Introduction to Linear Independence
- More on linear independence
- Span and Linear Independence Example
- Linear Subspaces
- Basis of a Subspace
- Vector Dot Product and Vector Length
- Proving Vector Dot Product Properties
- Proof of the Cauchy-Schwarz Inequality
- Vector Triangle Inequality
- Defining the angle between vectors
- Defining a plane in R3 with a point and normal vector
- Cross Product Introduction
- Proof: Relationship between cross product and sin of angle
- Dot and Cross Product Comparison/Intuition
- Matrices: Reduced Row Echelon Form 1
- Matrices: Reduced Row Echelon Form 2
- Matrices: Reduced Row Echelon Form 3
- Matrix Vector Products
- Introduction to the Null Space of a Matrix
- Null Space 2: Calculating the null space of a matrix
- Null Space 3: Relation to Linear Independence
- Column Space of a Matrix
- Null Space and Column Space Basis
- Visualizing a Column Space as a Plane in R3
- Proof: Any subspace basis has same number of elements
- Dimension of the Null Space or Nullity
- Dimension of the Column Space or Rank
- Showing relation between basis cols and pivot cols
- Showing that the candidate basis does span C(A)
- A more formal understanding of functions
- Vector Transformations
- Linear Transformations
- Matrix Vector Products as Linear Transformations
- Linear Transformations as Matrix Vector Products
- Image of a subset under a transformation
- im(T): Image of a Transformation
- Preimage of a set
- Preimage and Kernel Example
- Sums and Scalar Multiples of Linear Transformations
- More on Matrix Addition and Scalar Multiplication
- Linear Transformation Examples: Scaling and Reflections
- Linear Transformation Examples: Rotations in R2
- Rotation in R3 around the X-axis
- Unit Vectors
- Introduction to Projections
- Expressing a Projection on to a line as a Matrix Vector Product
- Compositions of Linear Transformations 1
- Compositions of Linear Transformations 2
- Matrix Product Examples
- Matrix Product Associativity
- Distributive Property of Matrix Products
- Introduction to the inverse of a function
- Proof: Invertibility implies a unique solution to f(x)=y
- Surjective (onto) and Injective (one-to-one) functions
- Relating invertibility to being onto and one-to-one
- Determining whether a transformation is onto
- Exploring the solution set of Ax=b
- Matrix condition for one-to-one transformation
- Simplifying conditions for invertibility
- Showing that Inverses are Linear
- Deriving a method for determining inverses
- Example of Finding Matrix Inverse
- Formula for 2x2 inverse
- 3x3 Determinant
- nxn Determinant
- Determinants along other rows/cols
- Rule of Sarrus of Determinants
- Determinant when row multiplied by scalar
- (correction) scalar multiplication of row
- Determinant when row is added
- Duplicate Row Determinant
- Determinant after row operations
- Upper Triangular Determinant
- Simpler 4x4 determinant
- Determinant and area of a parallelogram
- Determinant as Scaling Factor
- Transpose of a Matrix
- Determinant of Transpose
- Transpose of a Matrix Product
- Transposes of sums and inverses
- Transpose of a Vector
- Rowspace and Left Nullspace
- Visualizations of Left Nullspace and Rowspace
- Orthogonal Complements
- Rank(A) = Rank(transpose of A)
- dim(V) + dim(orthogonal complement of V) = n
- Representing vectors in Rn using subspace members
- Orthogonal Complement of the Orthogonal Complement
- Orthogonal Complement of the Nullspace
- Unique rowspace solution to Ax=b
- Rowspace Solution to Ax=b example
- Showing that A-transpose x A is invertible
- Projections onto Subspaces
- Visualizing a projection onto a plane
- A Projection onto a Subspace is a Linear Transformation
- Subspace Projection Matrix Example
- Another Example of a Projection Matrix
- Projection is closest vector in subspace
- Least Squares Approximation
- Least Squares Examples
- Another Least Squares Example
- Coordinates with Respect to a Basis
- Change of Basis Matrix
- Invertible Change of Basis Matrix
- Transformation Matrix with Respect to a Basis
- Alternate Basis Transformation Matrix Example
- Alternate Basis Transformation Matrix Example Part 2
- Changing coordinate systems to help find a transformation matrix
- Introduction to Orthonormal Bases
- Coordinates with respect to orthonormal bases
- Projections onto subspaces with orthonormal bases
- Finding projection onto subspace with orthonormal basis example
- Example using orthogonal change-of-basis matrix to find transformation matrix
- Orthogonal matrices preserve angles and lengths
- The Gram-Schmidt Process
- Gram-Schmidt Process Example
- Gram-Schmidt example with 3 basis vectors
- Introduction to Eigenvalues and Eigenvectors
- Proof of formula for determining Eigenvalues
- Example solving for the eigenvalues of a 2x2 matrix
- Finding Eigenvectors and Eigenspaces example
- Eigenvalues of a 3x3 matrix
- Eigenvectors and Eigenspaces for a 3x3 matrix
- Showing that an eigenbasis makes for good coordinate systems
- Vector Triple Product Expansion (very optional)
- Normal vector from plane equation
- Point distance to plane
- Distance Between Planes
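Several of the videos listed above ("Showing that A-transpose x A is invertible", "Least Squares Approximation") build up to the normal equations `A^T A x = A^T b`. As an illustration only, here is a minimal plain-Python sketch that fits a line y ≈ c + m·t by solving those 2x2 normal equations with Cramer's rule; the function name `fit_line` and the sample points are hypothetical and not taken from the course.

```python
def fit_line(ts, ys):
    """Least-squares fit of y ~ c + m*t.

    The rows of A are [1, t_i], so the normal equations (A^T A) [c, m] = A^T y
    form a 2x2 system, solved here with Cramer's rule.
    """
    n = len(ts)
    # Entries of A^T A = [[n, s_t], [s_t, s_tt]]
    s_t = sum(ts)
    s_tt = sum(t * t for t in ts)
    # Entries of A^T y = [s_y, s_ty]
    s_y = sum(ys)
    s_ty = sum(t * y for t, y in zip(ts, ys))
    # A^T A is invertible exactly when the t_i are not all equal
    det = n * s_tt - s_t * s_t
    if det == 0:
        raise ValueError("A^T A is singular: need at least two distinct t values")
    c = (s_tt * s_y - s_t * s_ty) / det
    m = (n * s_ty - s_t * s_y) / det
    return c, m


print(fit_line([0, 1, 2], [6, 0, 0]))
```

For the points (0, 6), (1, 0), (2, 0), `fit_line([0, 1, 2], [6, 0, 0])` returns `(5.0, -3.0)`, i.e. the line y = 5 - 3t. Solving the normal equations directly is fine for small hand examples; numerical libraries generally prefer QR- or SVD-based solvers for better conditioning.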

This is a communication-intensive supplement to Linear Algebra (18.06). The main emphasis is on the methods of creating rigorous and elegant proofs and presenting them clearly in writing. The course starts with the standard linear algebra syllabus and eventually develops the techniques to approach a more advanced topic: abstract root systems in a Euclidean space.

Linear Algebra: Foundations to Frontiers (LAFF) is packed full of challenging, rewarding material that is essential for mathematicians, engineers, scientists, and anyone working with large datasets. Students appreciate our unique approach to teaching linear algebra because:

- It’s visual.

- It connects hand calculations, mathematical abstractions, and computer programming.

- It illustrates the development of mathematical theory.

- It’s applicable.

In this course, you will learn all the standard topics that are taught in typical undergraduate linear algebra courses all over the world, but using our unique method, you'll also get more! LAFF was developed following the syllabus of an introductory linear algebra course at The University of Texas at Austin taught by Professor Robert van de Geijn, an expert on high-performance linear algebra libraries. Through short videos, exercises, visualizations, and programming assignments, you will study Vector and Matrix Operations, Linear Transformations, Solving Systems of Equations, Vector Spaces, Linear Least-Squares, and Eigenvalues and Eigenvectors. In addition, you will get a glimpse of cutting-edge research on the development of linear algebra libraries, which are used throughout computational science.

MATLAB licenses will be made available to the participants free of charge for the duration of the course.

We invite you to LAFF with us!

Linear Algebra: Foundations to Frontiers (LAFF) is packed full of challenging, rewarding material that is essential for mathematicians, engineers, scientists, and anyone working with large datasets. Students appreciate our unique approach to teaching linear algebra because:

- It’s visual.

- It connects hand calculations, mathematical abstractions, and computer programming.

- It illustrates the development of mathematical theory.

- It’s applicable.

In this course, you will learn all the standard topics that are taught in typical undergraduate linear algebra courses all over the world, but using our unique method, you'll also get more! LAFF was developed following the syllabus of an introductory linear algebra course at The University of Texas at Austin taught by Professor Robert van de Geijn, an expert on high-performance linear algebra libraries. Through short videos, exercises, visualizations, and programming assignments, you will study Vector and Matrix Operations, Linear Transformations, Solving Systems of Equations, Vector Spaces, Linear Least-Squares, and Eigenvalues and Eigenvectors. In addition, you will get a glimpse of cutting-edge research on the development of linear algebra libraries, which are used throughout computational science.

MATLAB licenses will be made available to the participants free of charge for the duration of the course.

This summer version of the course will be released at an accelerated pace. Each of the three releases will consist of four “Weeks” plus an exam. There will be suggested due dates, but only the end of the course is a true deadline.

We invite you to LAFF with us!

FAQs

What is the estimated effort for the course?

About 8 hrs/week.

How much does it cost to take the course?

You can choose! Auditing the course is free. If you want to challenge yourself by earning a Verified Certificate of Achievement, the contributions start at $50.

Will the text for the videos be available?

Yes. All of our videos will have transcripts synced to the videos.

Are notes available for download?

PDF versions of our notes will be available for free download from the edX platform during the course. Compiled notes are currently available at www.ulaff.net.

Do I need to watch the videos live?

No. You watch the videos at your leisure.

Can I contact the Instructor or Teaching Assistants?

Yes, but not directly. The discussion forums are the appropriate venue for questions about the course. The instructors will monitor the discussion forums and try to respond to the most important questions; in many cases, responses from other students will be adequate and faster.

Is this course related to a campus course of The University of Texas at Austin?

Yes. This course corresponds to the Division of Statistics and Scientific Computing course titled “SDS329C: Practical Linear Algebra”, one option for satisfying the linear algebra requirement for the undergraduate degree in computer science.

Is there a certificate available for completion of this course?

Online learners who successfully complete LAFF can obtain an edX certificate. This certificate indicates that you have successfully completed the course, but does not include a grade.

Must I work every problem correctly to receive the certificate?

No, you are neither required nor expected to complete every problem.

What textbook do I need for the course?

There is no textbook. PDF versions of our notes will be available for free download from the edX platform during the course. Compiled notes are currently available at www.ulaff.net.

What are the principles by which assignment due dates are established?

There is a window of 19 days between the release of each week's material and the due date for that week's homework. While we encourage you to complete a week’s work before the next week launches, we realize that life sometimes gets in the way, so we have established a flexible cushion. Please don’t procrastinate. The course closes on 25 May 2015, which gives you nineteen days from the release of the final to complete the course.

Are there any special system requirements?

You may need at least 768 MB of RAM and 2-4 GB of free hard drive space. You should be able to access the course using Chrome or Firefox.

This mini-course is intended for students who would like a refresher on the basics of linear algebra. The course attempts to provide the motivation for "why" linear algebra is important in addition to "what" linear algebra is. Students will learn concepts in linear algebra by applying them in computer programs. At the end of the course, you will have coded your own personal library of linear algebra functions that you can use to solve real-world problems.

We explore creating and moving between various coordinate systems.

- Orthogonal Complements
- dim(V) + dim(orthogonal complement of V) = n
- Representing vectors in Rn using subspace members
- Orthogonal Complement of the Orthogonal Complement
- Orthogonal Complement of the Nullspace
- Unique rowspace solution to Ax=b
- Rowspace Solution to Ax=b example
- Projections onto Subspaces
- Visualizing a projection onto a plane
- A Projection onto a Subspace is a Linear Transformation
- Subspace Projection Matrix Example
- Another Example of a Projection Matrix
- Projection is closest vector in subspace
- Least Squares Approximation
- Least Squares Examples
- Another Least Squares Example
- Coordinates with Respect to a Basis
- Change of Basis Matrix
- Invertible Change of Basis Matrix
- Transformation Matrix with Respect to a Basis
- Alternate Basis Transformation Matrix Example
- Alternate Basis Transformation Matrix Example Part 2
- Changing coordinate systems to help find a transformation matrix
- Introduction to Orthonormal Bases
- Coordinates with respect to orthonormal bases
- Projections onto subspaces with orthonormal bases
- Finding projection onto subspace with orthonormal basis example
- Example using orthogonal change-of-basis matrix to find transformation matrix
- Orthogonal matrices preserve angles and lengths
- The Gram-Schmidt Process
- Gram-Schmidt Process Example
- Gram-Schmidt example with 3 basis vectors
- Introduction to Eigenvalues and Eigenvectors
- Proof of formula for determining Eigenvalues
- Example solving for the eigenvalues of a 2x2 matrix
- Finding Eigenvectors and Eigenspaces example
- Eigenvalues of a 3x3 matrix
- Eigenvectors and Eigenspaces for a 3x3 matrix
- Showing that an eigenbasis makes for good coordinate systems
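The Gram-Schmidt videos in this list turn a basis into an orthonormal one by repeatedly subtracting projections onto the vectors already found. A minimal sketch of the classical variant in plain Python follows; the names `dot` and `gram_schmidt` and the tolerance `tol` are our own, chosen for illustration, and vectors that are (numerically) dependent on earlier ones are simply skipped.

```python
def dot(u, v):
    """Standard dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def gram_schmidt(vectors, tol=1e-12):
    """Classical Gram-Schmidt: return an orthonormal basis for span(vectors)."""
    basis = []
    for v in vectors:
        w = list(v)
        # Subtract the projection of the original v onto each basis vector so far
        for q in basis:
            coeff = dot(q, v)
            w = [wi - coeff * qi for wi, qi in zip(w, q)]
        norm = dot(w, w) ** 0.5
        # A (near-)zero remainder means v was already in the span: skip it
        if norm > tol:
            basis.append([wi / norm for wi in w])
    return basis


print(gram_schmidt([[1, 1, 0], [1, 0, 1], [0, 1, 1]]))
```

For example, `gram_schmidt([[3, 4]])` returns `[[0.6, 0.8]]`. In floating-point work, the modified Gram-Schmidt variant, which projects the partially updated vector `w` rather than the original `v`, is usually preferred for numerical stability.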

Understanding how we can map one set of vectors to another set. Matrices used to define linear transformations.

- A more formal understanding of functions
- Vector Transformations
- Linear Transformations
- Matrix Vector Products as Linear Transformations
- Linear Transformations as Matrix Vector Products
- Image of a subset under a transformation
- im(T): Image of a Transformation
- Preimage of a set
- Preimage and Kernel Example
- Sums and Scalar Multiples of Linear Transformations
- More on Matrix Addition and Scalar Multiplication
- Linear Transformation Examples: Scaling and Reflections
- Linear Transformation Examples: Rotations in R2
- Rotation in R3 around the X-axis
- Unit Vectors
- Introduction to Projections
- Expressing a Projection on to a line as a Matrix Vector Product
- Compositions of Linear Transformations 1
- Compositions of Linear Transformations 2
- Matrix Product Examples
- Matrix Product Associativity
- Distributive Property of Matrix Products
- Introduction to the inverse of a function
- Proof: Invertibility implies a unique solution to f(x)=y
- Surjective (onto) and Injective (one-to-one) functions
- Relating invertibility to being onto and one-to-one
- Determining whether a transformation is onto
- Exploring the solution set of Ax=b
- Matrix condition for one-to-one transformation
- Simplifying conditions for invertibility
- Showing that Inverses are Linear
- Deriving a method for determining inverses
- Example of Finding Matrix Inverse
- Formula for 2x2 inverse
- 3x3 Determinant
- nxn Determinant
- Determinants along other rows/cols
- Rule of Sarrus of Determinants
- Determinant when row multiplied by scalar
- (correction) scalar multiplication of row
- Determinant when row is added
- Duplicate Row Determinant
- Determinant after row operations
- Upper Triangular Determinant
- Simpler 4x4 determinant
- Determinant and area of a parallelogram
- Determinant as Scaling Factor
- Transpose of a Matrix
- Determinant of Transpose
- Transpose of a Matrix Product
- Transposes of sums and inverses
- Transpose of a Vector
- Rowspace and Left Nullspace
- Visualizations of Left Nullspace and Rowspace
- Rank(A) = Rank(transpose of A)
- Showing that A-transpose x A is invertible
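Two items from this list, "nxn Determinant" and "Formula for 2x2 inverse", can be sketched in a few lines of Python. This sketch assumes cofactor (Laplace) expansion along the first row, which is one of several equivalent methods and far slower than row reduction for large matrices, and it uses `fractions.Fraction` so the inverse comes out exact. The names `det` and `inverse_2x2` are illustrative, not from the course.

```python
from fractions import Fraction


def det(m):
    """Determinant by cofactor expansion along the first row (exponential time)."""
    n = len(m)
    if n == 1:
        return m[0][0]
    total = 0
    for j in range(n):
        # Minor: delete row 0 and column j
        minor = [row[:j] + row[j + 1:] for row in m[1:]]
        total += (-1) ** j * m[0][j] * det(minor)
    return total


def inverse_2x2(m):
    """Inverse of [[a, b], [c, d]] as (1 / (ad - bc)) * [[d, -b], [-c, a]]."""
    (a, b), (c, d) = m
    D = det(m)  # equals a*d - b*c
    if D == 0:
        raise ValueError("matrix is singular (determinant 0)")
    D = Fraction(D)
    return [[d / D, -b / D], [-c / D, a / D]]


print(det([[6, 1, 1], [4, -2, 5], [2, 8, 7]]))
print(inverse_2x2([[4, 7], [2, 6]]))
```

Here `det([[6, 1, 1], [4, -2, 5], [2, 8, 7]])` evaluates to `-306`, and `inverse_2x2([[4, 7], [2, 6]])` returns `[[Fraction(3, 5), Fraction(-7, 10)], [Fraction(-1, 5), Fraction(2, 5)]]`, since the determinant is 4·6 - 7·2 = 10.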

Let's get our feet wet by thinking in terms of vectors and spaces.

- Introduction to Vectors
- Vector Examples
- Scaling vectors
- Adding vectors
- Parametric Representations of Lines
- Linear Combinations and Span
- Introduction to Linear Independence
- More on linear independence
- Span and Linear Independence Example
- Linear Subspaces
- Basis of a Subspace
- Vector Dot Product and Vector Length
- Proving Vector Dot Product Properties
- Proof of the Cauchy-Schwarz Inequality
- Vector Triangle Inequality
- Defining the angle between vectors
- Defining a plane in R3 with a point and normal vector
- Cross Product Introduction
- Proof: Relationship between cross product and sin of angle
- Dot and Cross Product Comparison/Intuition
- Vector Triple Product Expansion (very optional)
- Normal vector from plane equation
- Point distance to plane
- Distance Between Planes
- Matrices: Reduced Row Echelon Form 1
- Matrices: Reduced Row Echelon Form 2
- Matrices: Reduced Row Echelon Form 3
- Matrix Vector Products
- Introduction to the Null Space of a Matrix
- Null Space 2: Calculating the null space of a matrix
- Null Space 3: Relation to Linear Independence
- Column Space of a Matrix
- Null Space and Column Space Basis
- Visualizing a Column Space as a Plane in R3
- Proof: Any subspace basis has same number of elements
- Dimension of the Null Space or Nullity
- Dimension of the Column Space or Rank
- Showing relation between basis cols and pivot cols
- Showing that the candidate basis does span C(A)
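The reduced row echelon form videos lead directly to rank and nullity: the pivot columns of rref(A) identify which columns of A form a basis for C(A), and each non-pivot (free) column contributes one dimension to the null space. A minimal sketch with exact `Fraction` arithmetic follows; the function `rref` is hypothetical and not taken from the course materials.

```python
from fractions import Fraction


def rref(m):
    """Return (R, pivot_cols): the reduced row echelon form of m, computed
    exactly with Fractions, and the indices of its pivot columns."""
    R = [[Fraction(x) for x in row] for row in m]
    rows, cols = len(R), len(R[0])
    pivot_cols = []
    r = 0
    for c in range(cols):
        # Find a row at or below r with a nonzero entry in column c
        p = next((i for i in range(r, rows) if R[i][c] != 0), None)
        if p is None:
            continue  # no pivot here: column c is a free column
        R[r], R[p] = R[p], R[r]
        # Scale the pivot row so the pivot entry is 1
        piv = R[r][c]
        R[r] = [x / piv for x in R[r]]
        # Eliminate column c from every other row
        for i in range(rows):
            if i != r and R[i][c] != 0:
                f = R[i][c]
                R[i] = [a - f * b for a, b in zip(R[i], R[r])]
        pivot_cols.append(c)
        r += 1
        if r == rows:
            break
    return R, pivot_cols


R, pivots = rref([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
print(R, pivots)
```

For this matrix the pivots are columns `[0, 1]`, so the rank is 2 and, by rank-nullity, the nullity is 3 - 2 = 1.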

The course is an introduction to linear and discrete optimization - an important part of computational mathematics with a wide range of applications in many areas of everyday life.

This course will cover the very basic ideas in optimization. Topics include the basic theory and algorithms behind linear and integer linear programming along with some of the important applications. We will also explore the theory of convex polyhedra using linear programming.

Learn to analyze circuits containing resistors, capacitors, and inductors. This course is directed toward people in science or engineering fields outside electrical and computer engineering.

Phenomena as diverse as the motion of the planets, the spread of a disease, and the oscillations of a suspension bridge are governed by differential equations. This course is an introduction to the mathematical theory of ordinary differential equations and follows a modern dynamical systems approach. In particular, equations are analyzed using qualitative, numerical, and if possible, symbolic techniques.
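As one example of the numerical techniques mentioned, the simplest method for an initial value problem y' = f(t, y), y(t0) = y0 is forward Euler, which repeatedly follows the tangent line for a step of size h. The sketch below is our own illustration, not MATH226 course material, and the name `euler` is hypothetical.

```python
import math


def euler(f, t0, y0, t_end, n_steps):
    """Approximate y(t_end) for y' = f(t, y), y(t0) = y0, using n_steps
    forward Euler steps of equal size h."""
    h = (t_end - t0) / n_steps
    t, y = t0, y0
    for _ in range(n_steps):
        y = y + h * f(t, y)  # follow the tangent line for one step
        t = t + h
    return y


# y' = y, y(0) = 1 has exact solution e^t, so y(1) = e
approx = euler(lambda t, y: y, 0.0, 1.0, 1.0, 1000)
print(approx, math.e)
```

With 1000 steps the estimate is about 2.7169, against e ≈ 2.71828. Euler's method is first order, so halving h roughly halves the error.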

MATH226 is essentially the edX equivalent of MA226, a one-semester course in ordinary differential equations taken by more than 500 students per year at Boston University. It is divided into three parts; MATH226.2x is the second part.

For additional information on obtaining credit through the ACE Alternative Credit Project, please visit here.

This course provides students with the basic analytical and computational tools of linear partial differential equations (PDEs) for practical applications in science and engineering, including heat/diffusion, wave, and Poisson equations. Analytics emphasize the viewpoint of linear algebra and the analogy with finite matrix problems. Numerics focus on finite-difference and finite-element techniques to reduce PDEs to matrix problems. The Julia Language (a free, open-source environment) is introduced and used in homework for simple examples.
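The reduction of a PDE to a matrix problem is easiest to see for the one-dimensional Poisson equation -u''(x) = f(x) on (0, 1) with u(0) = u(1) = 0. Replacing u'' by the centered difference (u_{i-1} - 2u_i + u_{i+1}) / h^2 and multiplying through by h^2 gives a tridiagonal system with -1, 2, -1 on its three diagonals. The course itself uses Julia; the sketch below uses plain Python for illustration, and the function names `solve_tridiagonal` and `poisson_1d` are hypothetical.

```python
def solve_tridiagonal(a, b, c, d):
    """Thomas algorithm for a tridiagonal system.
    a: sub-diagonal (len n-1), b: diagonal (len n),
    c: super-diagonal (len n-1), d: right-hand side (len n).
    No pivoting, so it assumes a well-behaved (e.g. diagonally dominant) matrix."""
    n = len(b)
    cp = [0.0] * max(n - 1, 0)
    dp = [0.0] * n
    # Forward sweep: eliminate the sub-diagonal
    dp[0] = d[0] / b[0]
    if n > 1:
        cp[0] = c[0] / b[0]
    for i in range(1, n):
        denom = b[i] - a[i - 1] * cp[i - 1]
        if i < n - 1:
            cp[i] = c[i] / denom
        dp[i] = (d[i] - a[i - 1] * dp[i - 1]) / denom
    # Back substitution
    x = [0.0] * n
    x[-1] = dp[-1]
    for i in range(n - 2, -1, -1):
        x[i] = dp[i] - cp[i] * x[i + 1]
    return x


def poisson_1d(f, n):
    """Finite-difference solution of -u'' = f on (0, 1), u(0) = u(1) = 0,
    at n interior grid points x_i = (i + 1) * h with h = 1 / (n + 1)."""
    h = 1.0 / (n + 1)
    xs = [(i + 1) * h for i in range(n)]
    a = [-1.0] * (n - 1)
    b = [2.0] * n
    c = [-1.0] * (n - 1)
    d = [h * h * f(x) for x in xs]  # right-hand side scaled by h^2
    return xs, solve_tridiagonal(a, b, c, d)


xs, u = poisson_1d(lambda x: 1.0, 9)
print(list(zip(xs, u)))
```

With f(x) = 1 the exact solution is u(x) = x(1 - x)/2, and because the centered second difference is exact for quadratics, the computed values match it at the grid points up to rounding error.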

This course is a study of speech sounds: how we produce and perceive them and their acoustic properties. It explores the influence of the production and perception systems on phonological patterns and sound change. Acoustic analysis and experimental techniques are also discussed.

This course studies the development of bilingualism in human history (from Australopithecus to present day). It focuses on linguistic aspects of bilingualism; models of bilingualism and language acquisition; competence versus performance; effects of bilingualism on other domains of human cognition; brain imaging studies; early versus late bilingualism; opportunities to observe and conduct original research; and implications for educational policies among others. The course is taught in English.

This course is a detailed examination of the grammar of Japanese and its structure, which differs significantly from that of English, with special emphasis on problems of interest in the study of linguistic universals. Data from a broad group of languages is studied for comparison with Japanese. This course assumes familiarity with linguistic theory.
